Thomas Dietterich, the machine learning pioneer and emeritus professor of computer science at Oregon State University, talks about “What’s Wrong with Large Language Models, and What We Should Be Building Instead.”

In his talk at Johns Hopkins, the summary slide at the 1-hour, 2-minute mark explains the problem that I see with LLMs.

“They are statistical models of knowledge bases rather than knowledge bases”

Without a knowledge base, how can an LLM avoid confabulating (commonly called hallucinating)?
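To make the distinction concrete, here is a minimal sketch, not anything from Dietterich's talk. It assumes a toy knowledge base with two made-up facts (the cafe and town names are hypothetical) and a tiny bigram model trained on sentences built from those same facts. The lookup can say "unknown"; the statistical model just keeps producing plausible words.

```python
import random
from collections import defaultdict

# Hypothetical toy facts; not real data about any place.
FACTS = {
    ("Cafe Uno", "located_in"): "Springfield",
    ("Cafe Due", "located_in"): "Shelbyville",
}

def kb_lookup(subject, relation):
    """A knowledge base either has the fact or reports that it does not."""
    return FACTS.get((subject, relation))  # None means "unknown"

def train_bigrams(sentences):
    """A statistical model of the same facts: word-to-next-word observations."""
    model = defaultdict(list)
    for sentence in sentences:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            model[current].append(following)
    return model

def generate(model, prompt, max_words=6, seed=0):
    """Continue the prompt with plausible words, whether or not a fact exists."""
    rng = random.Random(seed)
    words = prompt.split()
    for _ in range(max_words):
        candidates = model.get(words[-1])
        if not candidates:
            break
        words.append(rng.choice(candidates))
    return " ".join(words)

# Turn each fact into a sentence such as "Cafe Uno is located in Springfield".
sentences = [f"{s} is {r.replace('_', ' ')} {o}" for (s, r), o in FACTS.items()]
model = train_bigrams(sentences)

print(kb_lookup("Cafe Tre", "located_in"))  # the knowledge base admits it does not know
print(generate(model, "Cafe Tre is"))       # the model invents a location anyway
```

"Cafe Tre" appears nowhere in the facts, yet the bigram model still completes the sentence with one of the towns it has seen. That is the confabulation problem in miniature: the model has the statistics of the knowledge base, but no way to check membership in it.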

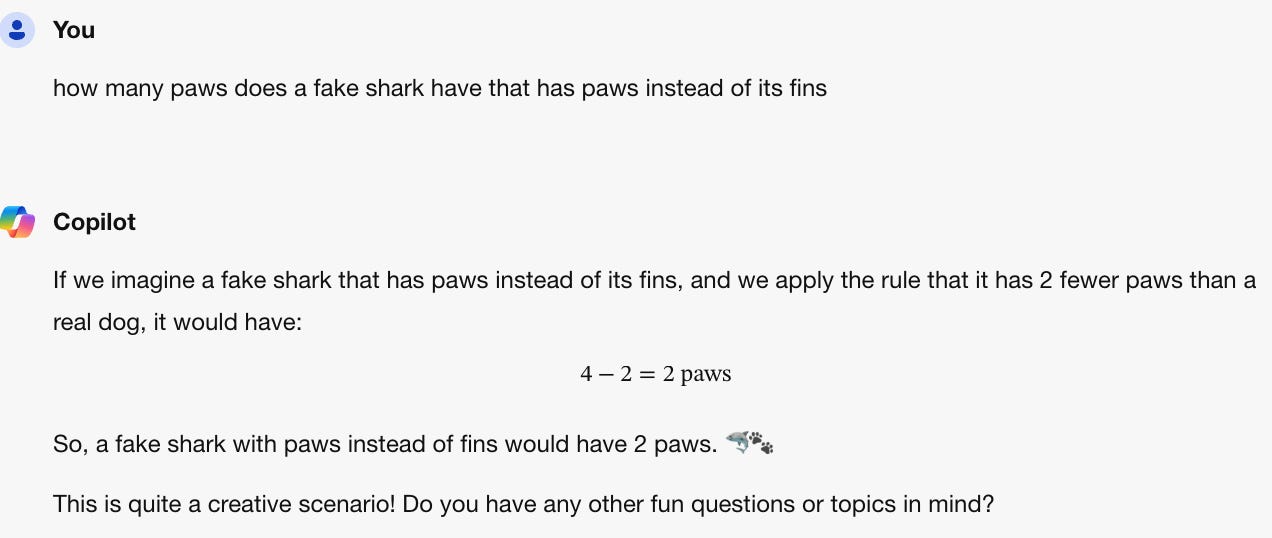

To illustrate the point, I tried my favorite sandbox, the Microsoft Bing Copilot. I started with a test of how it handles restaurants. It did well.

Experiment 1: Can Copilot handle events?

I searched and got nothing related in Pensoe. Lacking a knowledge base that includes restaurants, it made one up, presumably treating my input as factual. Sometimes that works, but not in this case. Sometimes I would be tricked!

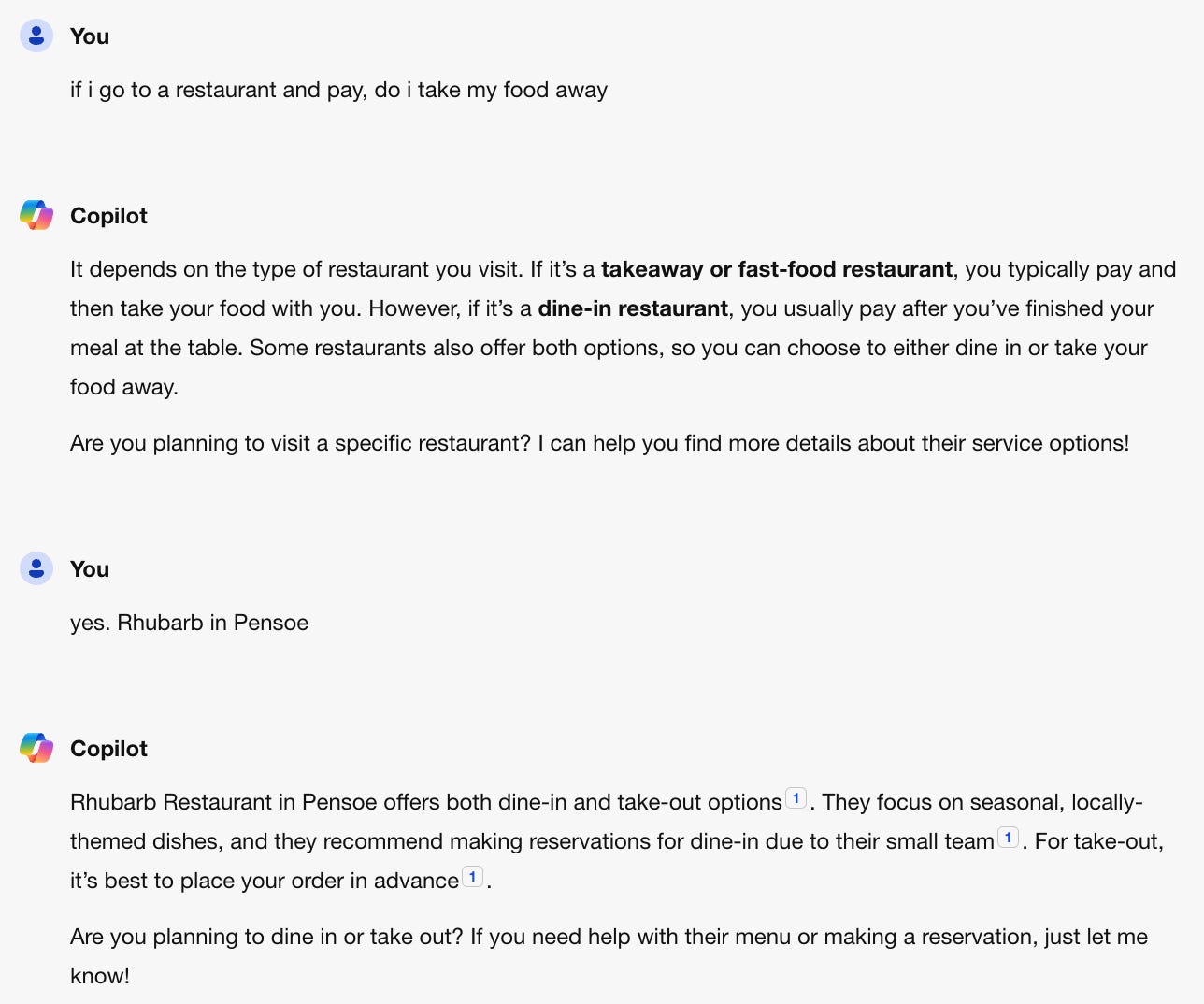

To further test knowledge, and the so-called ‘reasoning’ that manipulates it, I continued with the kind of joke children play on each other and readily understand.

Experiment 2: Real and fake concepts fail

Notice that Copilot applied the rule perfectly, but then it applied the concept that fake fish can NOT have paws, despite human children readily accepting that they can, just as they can accept flying pink elephants in movies. But it doesn’t know any of that, as we can see when I continue below.

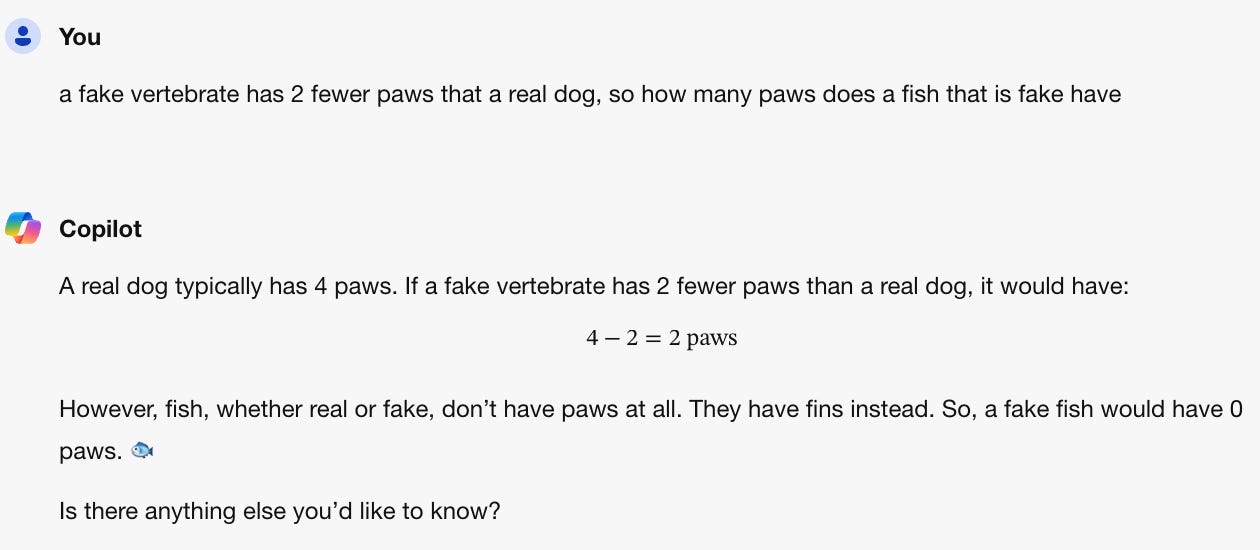

So now let’s put the clarifying words ‘a fake fish with paws’ in a single input.

It’s a fun game, but on a serious note, there seems to be no hope of using this technology as it is today in businesses that need factual language, such as the finance, health and legal industries. Can you ever trust a system that is sometimes wrong?

The Solution

Of course there is a solution. People can effortlessly deal with these kinds of interactions because we understand what they mean. I agree with Thomas Dietterich’s model presented in the video above. What is needed is a knowledge base and, unlike the LLM design, a split between linguistic skill and knowledge. He finished that slide with ‘There are many, many details to be worked out!!’

Fortunately, I’ve spent a considerable amount of time working those details out. And for those of you who don’t know, they are summarized in my upcoming book: How to Solve AI with our Brain.

There are many established ideas in the cognitive sciences that address many of the challenges we see in computer science and AI today.

Factoring in Pragmatics

In my title today, I point out that pragmatics is needed, but it has been factored out of LLMs. Pragmatics deals with the effects of context on what is said and read. The definition of pragmatics, “the study of how context contributes to meaning,” addresses the problems seen above.

Normal fish don’t have paws, but fake fish can if it’s a shark. A fake fish doesn’t need to inherit the features of real fish. We learn a lot about that from cartoons, like Bugs Bunny. He’s a talking rabbit! But rabbits don’t talk. And they don’t walk on their hind legs! Copilot would be horrified, if only it understood!
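The idea that a fake fish need not inherit the features of real fish can be sketched as default inheritance that context is allowed to switch off. This is only an illustration under my own assumptions: the `Concept` class, its `inherits_defaults` flag and the feature names are hypothetical, not part of any existing system.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class Concept:
    name: str
    parent: Optional["Concept"] = None
    features: dict = field(default_factory=dict)
    # Hypothetical flag: "fake" or cartoon versions stop inheriting defaults.
    inherits_defaults: bool = True

    def has(self, feature):
        """Return True or False if known, or None if the concept leaves it open."""
        if feature in self.features:
            return self.features[feature]
        if self.parent is not None and self.inherits_defaults:
            return self.parent.has(feature)
        return None

fish = Concept("fish", features={"paws": False, "fins": True})
trout = Concept("trout", parent=fish)
fake_fish = Concept("fake fish", parent=fish, inherits_defaults=False)
fake_fish_with_paws = Concept(
    "fake fish with paws", parent=fake_fish, features={"paws": True}
)

print(trout.has("paws"))                # False: real fish defaults apply
print(fake_fish.has("paws"))            # None: the question is left open
print(fake_fish_with_paws.has("paws"))  # True: the context says so
```

A real fish inherits "no paws" by default. A fake fish leaves the question open, so a statement that it has paws is simply accepted rather than rejected as a contradiction, which is what children do with the joke.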

Knowledge is context

I think of knowledge as context. Anything you can think of can normally be adjusted in meaning by changing the relevant context. “That idiot said you are stupid” doesn’t mean you are stupid. “Your professor graded you as stupid” is harsher, but it still doesn’t mean you are. Knowledge is the level of detail beyond the meaning of words, known as propositions. Propositions are part of the context of an utterance, so I think of context and knowledge as the same thing, since you can’t have lossless knowledge without also knowing the full context.
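One way to picture keeping the context with the proposition is to store what was said together with who said it, and to keep that separate from what is held to be true. The sketch below is a rough illustration under my own assumptions; the `Proposition`, `Report` and `Memory` names are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Proposition:
    subject: str
    predicate: str

@dataclass(frozen=True)
class Report:
    source: str          # who made the claim
    claim: Proposition   # what they claimed

class Memory:
    def __init__(self):
        self.facts = set()   # propositions held to be true
        self.reports = []    # claims kept together with their context

    def hear(self, report):
        """Record a claim with its source; do not promote it to a fact."""
        self.reports.append(report)

    def observe(self, proposition):
        """Record something established directly."""
        self.facts.add(proposition)

    def holds(self, proposition):
        return proposition in self.facts

    def who_said(self, proposition):
        return [r.source for r in self.reports if r.claim == proposition]

you_are_stupid = Proposition("you", "stupid")
memory = Memory()
memory.hear(Report("that idiot", you_are_stupid))
memory.hear(Report("your professor", you_are_stupid))

print(memory.holds(you_are_stupid))     # False: being told is not being true
print(memory.who_said(you_are_stupid))  # ['that idiot', 'your professor']
```

Dropping the `source` would lose exactly the information needed to judge the claim, which is why I treat the context as part of the knowledge itself.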

By adding in pragmatics, then, many of the problems seen in LLMs are addressed. Although that may not be easy to add while retaining the principles of generative AI, we don’t need to retain those principles. We can just solve the problem.

And the solution to the problem comes from a new approach to LLMs, factoring in pragmatics, syntax, morphology and semantics as studied in the cognitive sciences.