Thinking about a Thought Experiment

What would John Searle or Alan Turing say?

The American philosopher John Searle published a thought experiment about the difference between the appearance of understanding and actual understanding: the so-called Chinese Room Argument. It dates back to 1980.

We can draw on what the cognitive sciences know today to improve its interpretation, while trying to channel the late Alan Turing or mind-meld remotely with John Searle.

The argument applies equally well to today’s AI approach known as large language models (LLMs), which in some respects appear to understand what they are doing because of the responses they produce. Humans tend to anthropomorphize what they experience.

By looking at the Chinese room, Searle makes an important point about natural language understanding (NLU), which has been my area of work for many years now.

To me, the argument boils down to why formal linguistics, as implemented in rules-based parsers, has never been able to recognize human language accurately.

The Thought Experiment

In the Stanford Encyclopedia article referenced above, the details of the thought experiment are well summarized, but let’s go through it ourselves. Those who are familiar with the Turing test will see the similarity: someone is behind a door and is communicated with only through typed messages!

Imagine you’re in a room with a pile of cards. You don’t understand Chinese, but there is a procedure to follow with the cards when you receive input symbols that you also do not understand. Some input is received, and following your instructions, you assemble the cards to produce a response.

Even though the symbols are meaningless to you, you follow the procedure and respond according to your instructions. Clearly you don’t understand what the messages say, but to a Chinese person on the outside, Chinese language is being put in and Chinese responses are being returned.

There’s the contrast to understanding. You don’t understand the Chinese, but the outside world assumes you do as the controller inside the room. The outside world can laugh at your ‘jokes’ even though you have no idea that the procedure produces a joke.
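The procedure in the room can be sketched as a plain lookup. This is a minimal illustration, not Searle's own formulation: the rule book entries, the fallback reply and the function name `chinese_room` are all invented here. The point it shows is that the code matches symbol shapes and never consults what they mean.

```python
# Hypothetical rule book: maps an input string of symbols to an output string.
# The entries are invented for illustration. The English glosses in the
# comments are for the reader only; the function below never uses them.
RULE_BOOK = {
    "你好吗": "我很好",            # "How are you?" -> "I am fine"
    "你叫什么名字": "我没有名字",  # "What is your name?" -> "I have no name"
}

# Hypothetical default card to hand back when no rule matches
# ("Please say that again").
FALLBACK = "请再说一遍"


def chinese_room(symbols: str) -> str:
    """Return whatever response the rule book dictates for these symbols.

    This is pure shape matching: nothing here inspects meaning or context.
    """
    return RULE_BOOK.get(symbols, FALLBACK)


print(chinese_room("你好吗"))
```

To the person outside, the reply looks like conversation. Inside, it is a dictionary lookup.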

I’ll leave aside the rest of the philosophical interactions Searle dealt with, because we can look at this problem in light of modern linguistics, such as that taught in Role and Reference Grammar (RRG). We can also look at the lack of progress toward human-level language emulation in our machines since the 1980s.

Linguistics

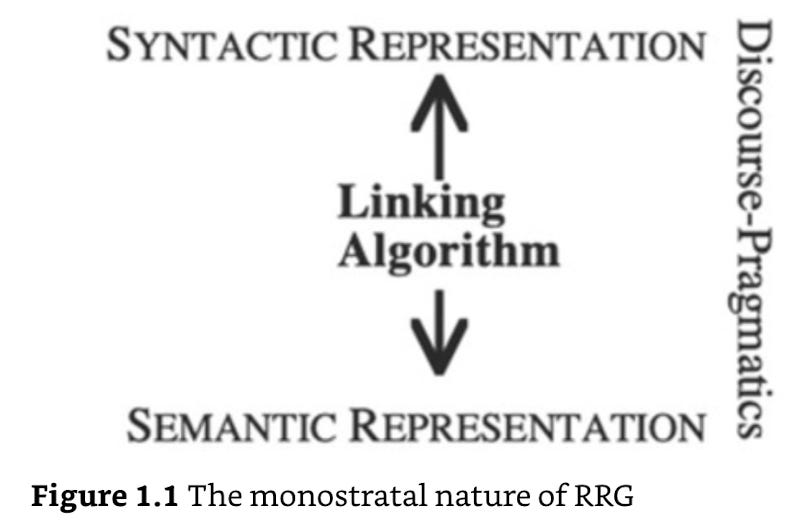

In RRG, language is made up of three ingredients — syntax, semantics and pragmatics (such as context) as illustrated in the Cambridge Handbook of Role and Reference Grammar:

I thought I’d leave in the figure reference since if you’re like me, you’ll wonder what monostral means!

“…RRG is a monostratal theory, in that it posits a single syntactic representation for a sentence; there are no syntactic derivations of any kind. Rather, there is a direct mapping between the syntactic representation and the semantic representation by the RRG linking algorithm.”

Now we can return to the Chinese room. When we get a set of characters, RRG says we need to map them to their semantic representation (their meaning). In other words, in human language, we understand what the words mean and by finding the respective syntax, we understand the meaning of the sentence(s).

The Room emulates a conversation, but because it doesn’t understand the characters or phrases, it doesn’t understand as a person does. Semantics and context are missing from the Chinese Room model!

Why do formal linguistics and the rules-based approach struggle? The rules-based model factors out meaning and context! Why? That’s the theory! And, anyway, an alternative appears much more complex at first glance.

Today’s AI World

In today’s world, this is equivalent to an LLM in which text is put in, a black box creates statistical word sequences based on training data or other interventions like RAG, and a response is returned as sequential text. At no stage does the LLM consider humor as a person would understand it, nor what the words mean, nor who else used those words, what else was said in previous discussions and why.
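To make "statistical word sequences" concrete, here is a deliberately tiny stand-in: a bigram model that picks each next word from the words seen to follow the current one in its training text. This is not how an LLM is built (real LLMs use neural networks over tokens and far more context), and the corpus and the names `train_bigrams` and `generate` are invented for illustration. It only shows text being produced from co-occurrence counts with no representation of meaning.

```python
import random
from collections import defaultdict


def train_bigrams(text):
    """Record, for each word, the list of words that followed it in the text."""
    words = text.split()
    table = defaultdict(list)
    for current, following in zip(words, words[1:]):
        table[current].append(following)
    return table


def generate(table, start, length, seed=0):
    """Emit up to `length` words by repeatedly sampling a recorded follower."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same text
    out = [start]
    for _ in range(length - 1):
        followers = table.get(out[-1])
        if not followers:  # the last word was never followed by anything
            break
        out.append(rng.choice(followers))
    return " ".join(out)


# Invented toy corpus; any text would do.
corpus = "the room returns symbols and the room follows rules and the rules return symbols"
table = train_bigrams(corpus)
print(generate(table, "the", 6))
```

Every adjacent word pair in the output was seen in the training text, which is why it can look fluent, yet nothing in the program knows what a room or a rule is.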

It is easy to anthropomorphize and assume that something human-like is behind it, but without meaning and context (or syntax, semantics and pragmatics), the text returned isn’t like a human being’s response.

With meaning and context factored out, AI tools are just programs, as in Searle’s Chinese room: they follow a procedure to generate a response without understanding.

Winding up: “Still, isn’t the Room Intelligent?”

The room certainly appears intelligent. And let’s face it, responding to any input from a person and emulating someone correctly is what the Turing test asks for.

In Alan Turing’s 1950 test, published in Mind, he considered a machine that can respond like the Chinese Room of Searle’s thought experiment to be a machine that thinks. By his definition, the machine passes the “Imitation Game.” What ‘think’ means, if anything, can be left for another day, but Turing argues that a machine that can think is intelligent, and that intelligence is a synonym for being like a person because it must have comparable cognitive capacity.

And that’s the point!

While Searle proposes a hypothetical procedure to manipulate symbols, we know today that creating such a procedure is difficult; no, impossible. That’s measured by progress: no machine has ever emulated human conversation across the range of possible topics as a human being could. It didn’t work with 2015 chatbots, nor with the hallucinating, confabulating LLMs of 2024.

The best way to create a procedure for a Chinese Room would be to emulate linguistics, such as RRG, and bring in semantics and context. It would be a breakthrough, but one in which the current system is discarded.

Such a system would understand what it is doing, as a person would, since the semantics and context it uses form the basis of human cognition. Without those building blocks of human capability, we just emulate the Chinese room that does not understand.

Excluding such elements has been the downfall of AI to this day. Those omissions lead us to the famous quote attributed to Albert Einstein:

“Everything should be made as simple as possible, but not simpler.”

Did John Searle and Alan Turing ever meet? Did they ever have an actual conversation about AI?

Here's an idea: set up two ChatGPT sessions, tell one to play Alan Turing and the other to play John Searle, then stage a mock debate between them on their competing theories.

I think the result would be quite entertaining (possibly even important).