What is the Frame Problem? The AI Frame problem emerged from the early days of AI and spawned systems such as FrameNet and caused a focus on statistical solutions to AI. Most of today’s problems in AI are tied up with it.

As IBM’s Fred Jelinek once said something like:

“Every time I fired a linguist, the quality of the speech recognizer went up.”

Jelinek won the battle for better speech-based AI by pivoting to statistics, but lost the war to obtain human-like accuracy as is needed for killer AI apps. Good enough just isn’t good enough.

A useful business principle to remember in order to progress (while trying to avoid stifling innovation):

“Perfect is the enemy of good.”

A great retort was said in the TV program about the history of Blackberry:

“Good enough is the enemy of humanity.”

In the complex goal to replicate humans, good enough isn’t good enough! Finding point solutions to problems in the absence of a general solution has led to unsolvable errors in systems as seen with speech to text system and Large Language Models (LLMs) because the scope is too narrow. We get rhyming captions, not accurate ones. The meaning of what is said is needed to communicate with humans, not historical statistics to generate a next word. Language without meaning is like math without numbers.

And meaning cannot be extracted accurately without the key elements of human language – semantics (the meanings of what is said) and pragmatics (the context of the situation that aligns the meaning with the conversation).

Fundamentally, conversation is an interchange in context (immediate common ground) with necessary elements introduced from long-term memory (general common ground). Factoring out the key elements (like context and semantics) is as bad as using the wrong scientific model.

Astronomy couldn’t be solved with the geocentric model, but Galileo brought in natural science and his telescope to justify a better model that led to improvements in the science of astronomy and its ability to predict planetary motion.

The geocentric model that Galileo helped to overcome is like formal science, starting with a set of rules like “the earth is unmoving at the center of the universe.”

We don’t want to argue against the rules in a formal science, like how converting words to vectors (numbers) that retain cosine similarity is good enough to model human language. In the case of language, the model of similarity is not good enough. But how do you prove it? Is hundred of billions of dollars in the attempt enough? Is the detection of a number of unsolvable problems in the best systems today enough?

Formal science can easily survive attacks because it doesn’t rely on observations of the world. It is just some claims. Natural science, in contrast, can be falsified with observation.

Today’s article focuses on a key challenge in AI, how to deal with the AI frame problem.

Brain limitations should guide AI

Why care about how brains work to solve AI?

We don’t need to, but since 1956 when AI was named, progress against the central AI goals such as creating human-like robots and understanding language have been unsuccessful.

When you don’t succeed, a good technique for progress is to try something else.

LLMs have fundamental limitations when used outside of their design. LLMs generate probable tokens (think, next word). They aren’t conversational engines. They cost a bomb! To gather their statistics, they can cost more than $100m USD and they burn electricity and water at alarming rates. Because they don’t solve many human problems due to their design, we could use those billions spent for better purposes: what about improving our quality of life around the world, instead?

Or we could focus on solving AI.

We even have the 1980s Moravec’s Paradox where things that people are good at are things machines can’t do, and things that machines can do well are done poorly by humans. Humans can pick up sheets of paper from the floor, fold them in half and stack them neatly on a selected surface but today’s robots cannot. Machines can rapidly memorize a phone book when given access to its entries, while most humans cannot. (We miss you Kim Peek!)

By studying and emulating human brains, we can learn what is needed to improve AI. And as computers are millions of times faster than the human brain’s building block, the neuron, improvements in computer capabilities over humans is possible in the future.

As an aside, there is a popular concept about a singularity or super intelligent machine (ASI) that can come from today’s statistical language models. If you want to predict the future, you should focus on how something works, now, not assume it will develop magical powers! Humans solve problems like cancer by learning medicine, not by learning English.

A machine that generates English is not the same as a medical doctor even if it quotes what a doctor wrote.

At another time I will look at the alien nature of human brains when compared with computers to see why the computational model is ineffective at AI today. We can still use computers to emulate humans with AI, but a simplification of how they work is needed because brains don’t have computational elements like programs, data or designers.

The AI Frame Problem

A good introduction to the AI Frame problem is in Daniel Dennett’s 1984 article, “Cognitive Wheels: The Frame Problem of AI.”

He explains 3 kinds of robots.

Robot 1 is sent into a room with a bomb to save an important object on a trolley. It brings out the object, but as the bomb was also on the trolley, the bomb blows it up!

Robot 2 is reprogrammed from robot one to check for things that might lead to problems. After it enters the room, it freezes as its computations consider all the possibilities to be careful of. And then the bomb goes off. It was too slow.

Robot 3 is reprogrammed further from robot two to only consider important things that are relevant. It confidently strides into the room, and freezes as it considers which things are relevant. Then the bomb goes off! It was still too slow.

In summary, the frame problem considers how to emulate the human ability to use common sense, rapidly! But what is common sense?

Solutions to the Frame Problem of AI

If the computers were faster, perhaps the frame problem is lessened as more can be done in the same timeframe. In the case of LLMs, a solution is to compute in parallel using GPUs, rather than find a different solution. LLMs get an answer in real time, but it cannot be trusted as it doesn’t understand what it is doing other than following computational sequences.

No, to emulate what our brains seem to do already can be simplified in a few ways:

· Better knowledge representation (fast, accurate relevance)

· Real-time processing (calculations are limited to run in the available time) and

· Learn from experience (use previous interactions to guide current actions)

The first point (knowledge representation) leads to the Fillmore and Minsky Frames, and the Schank Scripts. Fillmore’s FrameNet is available online.

Let’s come back another time to what a brain can do to solve this based on how it works in a bidirectional hierarchy of patterns (according to Patom brain theory or PT).

The second point (real-time processing) comes down to the algorithm chosen to solve the problems. On this topic, there are a few ways to think of the 80 billion or so neurons in our heads. In one case, each one can be either on or off, creating 2 to the power of 80 billion combinations.

That’s not aligned with how our brain works, of course, as neurons in our visual cortex activate based on visual tasks and those in other regions activate based on that region’s function.

Łukasz Dębowski wrote a good summary on the three statistical power laws (reference here). He asks the question (page 11): “Is natural language a finite-state process?” His answers are either:

Yes, the B.F. Skinner like argument claims billions of neurons can be in 1 of 2 states - therefore “verbal behavior can be modeled by a finite-state automaton with 2 to the power of 10 to the power 9 combinations” as estimated above.

Or no. The Chomsky like argument points out there are nested utterances of structure a to the power n times b to the power n, where n is arbitrarily large. The embedded nature of languages allows for ‘recursion’ of clauses within a sentence.

But in PT we note that the brain anatomy isn’t a collection of input neurons leading to a collection of output neurons. Instead our brain is composed of regions.

Some regions access visual input that connect to regions that recognize visual motion.

Some regions come from auditory input that connect to regions that recognize spoken word sounds.

Some regions connect to motor controls and enable rapid manipulation of large numbers of muscles in sequence, such as for speech and walking.

By removing the constraint of the formal model based on the exclusion of meaning and context, embedded phrases can be identified and resolved with meaning — effectively creating a combinatorial reduction in ambiguity. (A solution to the AI Frame Problem)

The number of different states in this machine is astronomically larger than the others as each region operates in combination with the others, effectively multiplying the numbers of permutations from each region.

Learning from experience (knowledge representation)

How does eating at McDonald’s prepare us for dining at a fine restaurant and how does it not? The steps in eating at Michelin-starred restaurants are similar to fast food, but just with different orders of steps! We sit and order before paying when dining, but McDonald’s sequence is to order, then pay, then sit.

It’s common sense.

This leads to a fascinating possibility. A brain compiles a broad list of different tasks, but re-uses in different scenarios. And instead of keeping separate scenarios, we can keep one only list and never duplicate any items. In other words the items are stored once, and the type of restaurant accesses the items it uses in the order it used them. The brain links to the pattern and uses it in context, but it doesn’t copy it.

This is like a normalized database except it involves multiple senses, motion and other interactions.

We are splitting semantics (what happens in some context) from context (what happened in total in a specific event). Like a knowledge graph built from semantics that only applies to a specific situation, not a general one. This distinction is important.

The steps in a frame are therefore just a list of possibilities some of which can be skipped, but where each item can be very specific, and yet flexible based on different experiences of re-use.

Normally we are seated in a restaurant before we order, but at McDonald’s we receive our order before we sit. The tasks are the same, but in a different order and with different details.

In technical terms, the context of each event is protected, but composed of semantic items that potentially can be modified in any example.

These observations support Patom theory because PT is designed to answer these questions.

Parsing to meaning in real time

Finding the phrases in sentences is one of the key steps in understanding language.

Languages allow composition of words and phrases with morphology and syntax. They also allow composition of literal words (a fixed expression) like “The Statue of Liberty.” ‘A statue of liberty’ is something different as one of the words was changed.

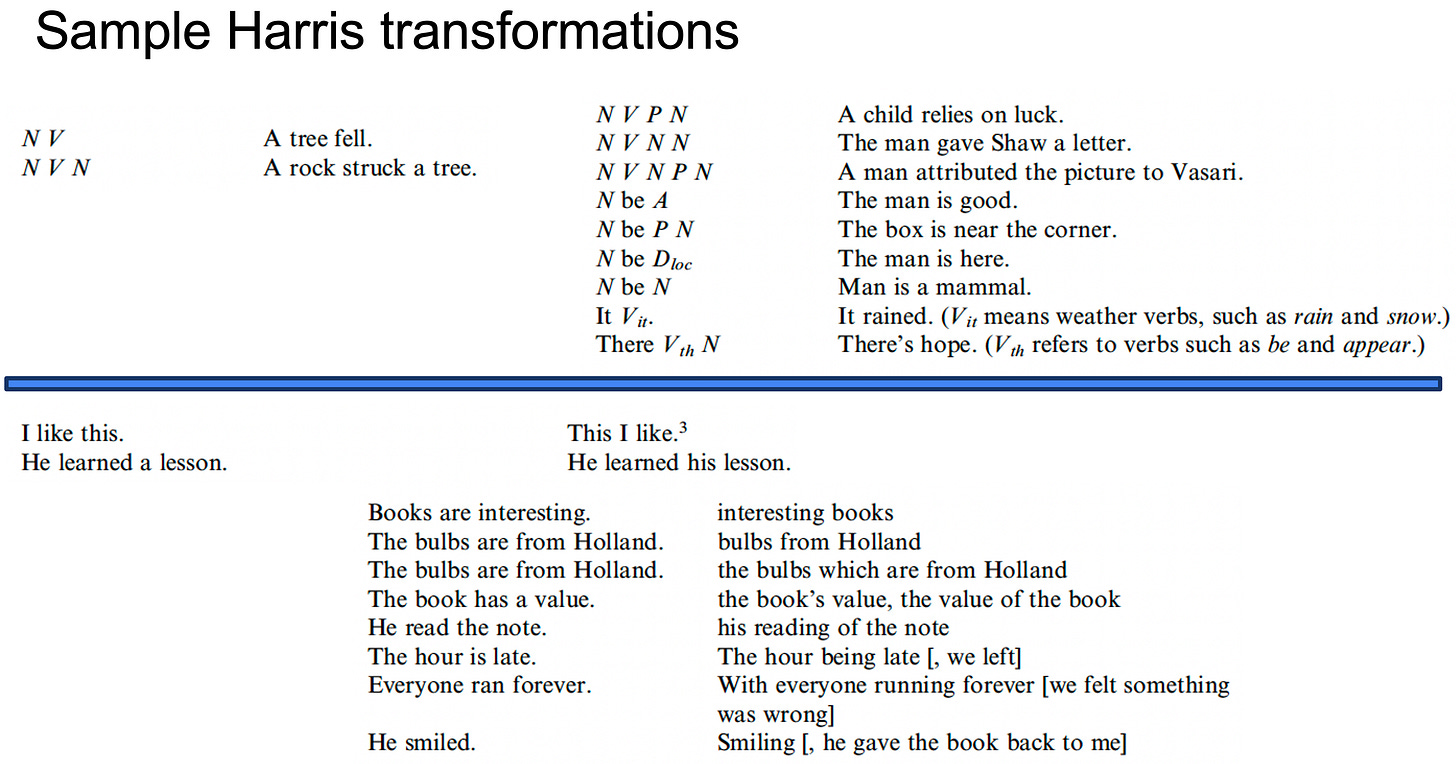

As an example of the range of phrases made possible in parts of speech (remember this creates an unsolvable combinatorial explosion of possibilities) I include the 1950s Zellig Harris extract:

Note how many sequences start with NV (noun verb)! Lists are not necessarily efficient models when we need to match their contents because the longer the candidate element is, the more matches take place prior to success or failure.

Image this as one of many: the man saw the dog the girl was fond of = N V N N be A P

An NV is quickly matched, but ensuring the full pattern of NVNPN also checks 3 more elements. Also syntax can match something that is rejected by semantics, so both steps need to be constrained in effort.

At one stage I was using sequences of an alternative model, but there were still 1200 or so combinations and they were still inadequate to deal with the real English model!

Given some thought, the trial-and-error method leads to an obvious solution to the need to test large numbers of sequences. This will enable much less powerful machines to execute in real time. Hindsight is very powerful!

Instead of 3 patterns like NV, NVN, NVP allow the decomposition of phrases into pieces. Let NV be the first patterns matched, call it H. This is only done once, not once for each phrase. Now match H, HN and HP! It doesn’t look like much, but once we start to include real-life variation, our ability to match patterns only once is a lifesaver.

This concept of decomposition introduces the pattern-atom concept. The 3 patterns are decomposed into 4 patterns, but each one is simpler and never duplicated for use in other patterns. Our inventory of patterns is atomic with only 1 pattern of its kind per system linked to the recognized version.

It matches from the inside out and is validated by meaning before continuing.

This eliminates the need for search.

Given a sample sentence like “Cats scratch dogs.” We can map it to NVN. How can we match NV? The computer model would perhaps store it in an array and test all elements of the array each time. But by linking phrases to their first constituent, it can be directly accessed and tested from N.

This is the model explained in my 2007 patent. The next simplification is the elimination of parts-of-speech in favor of semantic elements – predicates and referents. Predicates relate other things (and are nouns and verbs) while referents refer to ‘things’ (and are usually nouns, but sometimes verbs).

The swap from syntactic units (nouns, verbs, adjectives,…) to semantic units (predicates or referents) greatly reduces the number of combinations to consider; and with greater accuracy. The decomposition of phrases also greatly reduces the number of possible syntactic phrases to deal with.

In short, match parts of valid syntactic patterns and then validate or reject them with semantic selectional restrictions.

This type of work deals with the Frame problem of AI to run in real-time and using existing knowledge by reusing the same patterns only once.

Applied frame problem techniques

The popular approaches to dealing with the frame problem are computational and statistical. Algorithms with improved search or statistical compromises such as those used in deep learning and other artificial neural networks, but lossy solutions mean that we aren’t dealing at a human level, because there are countless problems people solve that machines cannot.

Do we need to create sophisticated reasoning systems? Or do the result of reasoning fall out of cognition directly? Maybe things like thinking, the mind and reasoning are just words we are taught to describe something we do.

There are systems to add knowledge to situations like the restaurant example above with what are called Frames and Scripts, but the manual effort and lack of scaling strategies suggest there will be better ways. I’m including some references to Frames below with Roger Schank’s book, “The Cognitive Computer” and Marvin Minsky’s book, “The Society of Mind.”

Assuming we need human-level emulation to align with our slow brain, how could we deal with large numbers of possible clarifications in real time without moving to the current lossy solutions and without sophisticated algorithms?

Expand the patterns

When we do something, we have more information than just the activity details. We also experience the consequences. In the case where we need to recognize syntax, we can use the previous pattern matched to index the next possible ones. That reduces the need for search if the numbers enable real-time recognition. In the case of parsing, the use of semantics to further reduce possibilities is one successful method that I have used.

In such linguistics, the phrase patterns can be learned as a result of their composition along with their valid semantic meaning. Learning is central to such skills and once a suitable semantic model is introduced, such patterns become obvious to people.

In other words, only try the patterns that can possibly be matched by indexing appropriately.

Context is involved in everything we do. Each situation includes its own context and if we can store those elements and the changes that take place, there is a way to exploit such knowledge for AI.

As long as we can recognize the current context, we can access any previous similar examples. Why? Because PT requires patterns to be unique, so previous pattern are directly linked to subsequent ones.

And in those examples, there may be actions that had a valuable result. If we only take the valuable results and then select the actions that created them, we are now implementing one of Dennett’s robots with limitations on the effort needed since similar context are likely to contain similar activities to solve current problems.

I found the ability to use the stored possible phrases in linguistics only against words or meanings that can match them a really handy optimization. That same approach can be used in other AI challenges as potential solutions to the Frame Problem.

Summary and Conclusion

Rather than compromises with lossy solutions to AI (statistics for example), perhaps robotics in general can benefit from context as a source to index or anchor possible activities and their consequences. Simply by exponentially reducing the number of potential considerations, the Frame Problem of AI can be addressed.

Additional Reading

Daniel Dennett, Cognitive Wheels: The Frame Problem of AI

Roger Schank, The Cognitive Computer: On Language, Learning and Artificial Intelligence.