How can computers solve the AI puzzle? The artificial intelligence (AI) industry has been working hard since 1956 to enable computers to emulate humans, with only limited success.

Goals like full speech interactions with people are unsolved. Driverless cars are unsolved. Interacting with AI agents or chatbots to accurately and correctly solve the wide variety of reasons people contact a company, in a selection of human languages, is unsolved. Even the proposed home robot, like Rosie from the 1960s cartoon, ‘The Jetsons,’ is missing from our lives!

This is the first article introducing a different way of thinking about AI that both

explains what animal and human brains are doing, and

shows how that can be applied to vastly improve AI.

At its core, the computational model, when used as a metaphor for a brain, is holding AI back. As with any ineffective model in science, we need to find a better one to help science progress.

Today’s article introduces an explanation for a way to solve AI now, using what we know in the cognitive sciences. The theoretical neuroscience of Patom theory will be applied to fill the gap between computers and brains.

The Empty Brain

The psychologist Robert Epstein published an article in Aeon in 2016 making the case that:

“Your brain does not process information, retrieve knowledge or store memories. In short: your brain is not a computer.”

Epstein explains his reasoning clearly in the referenced article, so rather than dealing with all the points, I’ll just surface the key ones and leave you to read the full detail separately.

Spoiler: For those who know my work, I’m a strong supporter of AI. I have computer software that converts text to meaning and uses that meaning in conversation. I’m also the theoretical neuroscientist who created Patom theory, which models brains to enable that software to work. Therefore, I strongly support the case for AI - computers emulating humans - because I can demonstrate solutions to some of the unsolved problems of AI, like parsing and lossless meaning representation.

Let’s consider his main points.

Baby brains

The brain of a baby enables it to survive in the world. It starts with essential capabilities: “senses, reflexes and learning mechanisms.” Reflexes are described as things babies do, like holding their breath when submerged in water, sucking things put in their mouths and grasping things put in their hands.

In Patom theory, the question of AI centers on ‘learning mechanisms.’ How do we learn what our mother looks like, sounds like and smells like? They come from different senses, and yet a baby can recognize their mother as a ‘thing’ with these properties in different senses. That’s some feat!

Let’s come back to learning (below under the Patom theory header) as we continue with Epstein’s paper.

The brain doesn’t have copies of words, pictures, songs or ‘memories’

Well, this heading is confronting. I mean, I’m pretty certain I have memories of parties I’ve been to, talks I’ve given, and people I’ve met. But reading more closely, there are not ‘copies’ of things like words.

Next, Epstein covers the things humans aren’t born with such as the range of things performed by digital computers - encoders, data, algorithms, and so on. Our brain never sorts data or information in any way, for example. We use .mp4 files and .jpg files to store movies and images on computer, respectively, but the brain doesn’t have files like that. Encoding data like that is not something a brain does.

He finishes this computer overview with:

“computers really do operate on symbolic representations of the world. They really store and retrieve. They really process. They really have physical memories. They really are guided in everything they do, without exception, by algorithms.

Humans, on the other hand, do not — never did, never will. Given this reality, why do so many scientists talk about our mental life as if we were computers?”

So the literal observation is correct. When we get to his examples we can see how the brain learns, what it learns and how it remembers.

Brain Metaphors

Next, Epstein covers the different metaphors that have been used for the brain. They went from hydraulic engineering in the 3rd century BC, to springs and gears in the 1500s, to a telegraph-like machine in the 1800s. Since the 1940s, the existence of computers has aligned the brain metaphor with computers.

IP (Information Processing) Metaphor

“The IP metaphor of human intelligence now dominates human thinking…” he writes.

Then, “The validity of the IP metaphor in today’s world is generally assumed without question.”

To reinforce his point, Epstein challenged top researchers to account for intelligent human behavior (my highlights below):

“without reference to any aspect of the IP metaphor. They couldn’t do it, ...(and months later)… they still had nothing to offer.”

This reminds me of astronomy, in which the obvious but wrong model, that the earth is stationary while the sun and planets move around us (the geocentric model), was replaced after careful observations of the sky with one that seems wrong, in which the earth and the other planets move around the sun (the heliocentric model). Amazingly, we don’t fall off our round, spinning planet, but it took Newton, years later, to explain mathematically what is happening.

During the period of geocentric science, it was hard to persuade others that the heliocentric model was better. “Earth can’t be round and moving through space or we’d fall off! It’s obvious.” Similarly, today it is hard to persuade anyone that the information processing or IP model of the brain is wrong.

“If the IP metaphor is so silly, why is it so sticky? … The IP metaphor, after all, has been guiding the writing and thinking of a large number of researchers in multiple fields for decades. At what cost?”

Using psychology to explore

How can his explanation be believed? I’ve seen top scientists claim that we know the brain is like a computer, just not the type of computer you are thinking of as it doesn’t have a disk drive or console! Don’t we?

Psychologists, a key part of the cognitive sciences, can make effective points by observing what people do.

Epstein’s experiment involves asking someone to create two pictures of something they know well, like a dollar bill. The first is to be drawn, ‘as detailed as possible,’ from memory. Then, given a real dollar for comparison, the second is drawn using the bill as a reference.

In the article you can see the difference. The one from memory is quite simple, a rectangle with some numbers in the corner, a sketch of a head in the center, and some other basic details. The second one looks like a dollar bill, almost a photocopy!

How else does the brain store data?

The rest of Epstein’s article considers the predictive power of the IP model. It is well worth reading. It includes the idea of downloading our brain in the future to some artificial brain to live forever! It explains the vast sums of money spent in understanding the brain, such as in the failed European ‘Human Brain Project’ of 2013. It proposes a non-IP model to catch a baseball, which is remarkably similar to my ABC Radio program from 2000 on Ockham’s Razor, in which I explained the challenges of a brain moving to hit a golf ball correctly.

Let’s move on to how Patom theory explains a non-computational brain with a final message from Robert Epstein that should be applied to all science fiction:

“…we will never achieve immortality through downloading.”

Patom/Breakthrough Representation

A pattern atom model (patom) starts with the need to recognize things ‘instantly’ for the sake of survival. We need the scent of a predator to be directly associated with the full idea of the predator. Equally, any sense must identify the predator - sight, hearing, touch and even the feel of the ground shaking as it moves! This is animal level brain function that human brains extended through evolution.

In a computer, because any information can be stored in any memory location, a search index and an algorithm are needed to convert from one encoded representation to another. But as justified above, a brain has no capability to do this: search is too slow and, worse, the brain doesn’t have symbolic representations like a .jpg or .mp4.

A constraint that does away with search is to compel the system to store each pattern only once. This is the pattern-atom concept: a pattern is atomic inside the system, a unique match for something. For efficiency, patterns are decomposed into their constituent parts to ensure regions don’t run out of space. To re-use a pattern, a hierarchy is introduced. When a pattern is matched, it signals its match to any up-stream pattern. To reinforce the total accuracy of a pattern, the up-stream patterns signal back that a subsequent match has been made.

This hierarchy with feedback introduces the concept of hierarchical, bidirectional memory.
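The store-once constraint and the two directions of signaling can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the Patom theory implementation: the names Pattern, PatternStore and signal_match, and the example patterns, are hypothetical, and a Python dictionary is used purely as a convenience for noticing that a pattern already exists.

```python
from dataclasses import dataclass, field


@dataclass(eq=False)
class Pattern:
    """One stored pattern: a sensory leaf or a combination of parts (hypothetical)."""
    key: tuple                                    # unique identity of the pattern
    parts: tuple = ()                             # constituent (down-stream) patterns
    parents: list = field(default_factory=list)   # up-stream patterns that re-use this one
    matched: bool = False                         # set by a bottom-up match
    confirmed: bool = False                       # set by top-down feedback


class PatternStore:
    """Hypothetical store that keeps every pattern exactly once."""

    def __init__(self):
        self._patterns = {}

    def leaf(self, name):
        return self._intern(("leaf", name), ())

    def combine(self, *parts):
        key = ("combo",) + tuple(part.key for part in parts)
        return self._intern(key, tuple(parts))

    def _intern(self, key, parts):
        # Store-once constraint: re-use the existing pattern if the key is known.
        if key not in self._patterns:
            pattern = Pattern(key, parts)
            for part in parts:
                part.parents.append(pattern)      # link part to its up-stream pattern
            self._patterns[key] = pattern
        return self._patterns[key]

    def __len__(self):
        return len(self._patterns)


def signal_match(pattern):
    """Mark a match, signal it up-stream, and let up-stream matches signal back."""
    pattern.matched = True
    for parent in pattern.parents:
        if not parent.matched and all(part.matched for part in parent.parts):
            signal_match(parent)                  # bottom-up: the combination matches
            for part in parent.parts:
                part.confirmed = True             # top-down: feedback to the parts


store = PatternStore()
stripe = store.leaf("stripe")
whisker = store.leaf("whisker")
face = store.combine(stripe, whisker)

print(store.leaf("stripe") is stripe)   # True: the pattern is not stored twice
print(len(store))                       # 3

signal_match(stripe)
signal_match(whisker)
print(face.matched, stripe.confirmed, whisker.confirmed)   # True True True
```

In this sketch a combination matches only when all of its parts have matched, which is one simple choice among several; the point is that the same stored part can feed any number of up-stream patterns without being copied.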

Symbolic, but decomposed

In the pattern-atom model described above, patterns are first recognized at the sensory level. They signal their matches to higher levels that, in turn, signal back with larger matches of combinations. This aligns with the concept of bottom-up matching (we build up a model of what we experience) and also top-down matching (we recognize what we have previously seen ahead of new patterns).

More importantly, it enables our observations of brain function and damage to be realized inside our model.

By combining senses together for individual objects, a brain recognizes an object from its impressions in any modality, just as you can recognize your mother’s voice as belonging to her as easily as you recognize her face, body or clothing.
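As a rough illustration of recognition from any modality, the sketch below links cues from several senses to one object, so that any single cue identifies it and brings the other cues with it. The class name MultiSensoryMemory, its methods, and the example cues are hypothetical, and the dictionary lookup merely stands in for a direct association; it is not a claim about how a brain stores anything.

```python
from collections import defaultdict


class MultiSensoryMemory:
    """Hypothetical association of cues from different senses with one object."""

    def __init__(self):
        self._object_of = {}                 # (sense, cue) -> object
        self._cues_of = defaultdict(dict)    # object -> {sense: cue}

    def associate(self, obj, sense, cue):
        """Link one sensory cue to an object."""
        self._object_of[(sense, cue)] = obj
        self._cues_of[obj][sense] = cue

    def recognize(self, sense, cue):
        """Return the object a single cue belongs to, or None if it is unknown."""
        return self._object_of.get((sense, cue))

    def expectations(self, obj):
        """Return the cues in every sense that are connected to the object."""
        return dict(self._cues_of.get(obj, {}))


memory = MultiSensoryMemory()
memory.associate("mother", "sight", "mother's face")
memory.associate("mother", "hearing", "mother's voice")
memory.associate("mother", "smell", "mother's scent")

who = memory.recognize("hearing", "mother's voice")
print(who)                         # mother
print(memory.expectations(who))    # the face, voice and scent linked to her
```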

The 100-step rule

The 100-step rule requires recognition of objects and choices of actions within 100 sequential neural activations. Assuming several brain regions per sense and several for multiples of senses, we can assume approximately 20 steps to recognize sensory input (with feedback and resolution applied for full consistency), comparable sequences to match potential outcomes, and comparable sequences to initiate an appropriate action comprising muscle movements.

Recognition of patterns in a region needs to therefore take the time of only twenty or so sequential neuron activations.
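The arithmetic behind this budget is simple enough to check directly. The snippet below treats each of the three stages named above as costing about 20 sequential steps; the figures are the rough estimates from the text, not measurements, and the stage labels are only illustrative.

```python
STEP_BUDGET = 100   # the 100-step rule: sequential neural activations available

# Rough per-stage estimates from the text (about 20 steps each, not measured).
stages = {
    "recognize sensory input": 20,
    "match potential outcomes": 20,
    "initiate an action": 20,
}

total_steps = sum(stages.values())
remaining = STEP_BUDGET - total_steps

print(f"{total_steps} of {STEP_BUDGET} steps used, {remaining} to spare")
# prints "60 of 100 steps used, 40 to spare"
```

On these assumptions the three stages fit inside the budget with room left over, which is why each stage can only afford a sequence of roughly twenty activations.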

David Hume’s Model

One of my many heroes from scientific history is David Hume. He made an important distinction in his observations. When our senses experience things, the experiences are very strong. He called these experiences ‘impressions.’ But when we recall those experiences, they are weaker. He called those ‘ideas.’

Hume’s model is useful in that an impression is recognized by the brain and it has access to the world directly through our sensors. While we are experiencing something, it can be compared with a copy, such as when we are drawing a copy of the dollar bill we are seeing. Impressions allow very detailed copying because we can continuously compare experience with our rendering of it.

Ideas enable our recall of previous impressions. As Epstein points out, the difference in recalled detail is fundamentally limited compared with impression detail.

Patom theory considers the brain as fundamentally a recognition machine, not a recall machine. The drawing from memory of a dollar bill highlights the key elements enabling the recognition of a dollar bill. We don’t have a .jpg file; instead, we recognize the objects in the world with sufficient detail for accuracy and speed.

Ideas indicate patterns

In the dollar bill recall example, people who have seen dollar bills many, many times are not able to accurately draw the image from memory. This suggests our brain doesn’t store a symbolic model of a dollar (like a .jpg file or image), but instead stores sufficient patterns to recognize new examples presented in impressions. This minimal pattern can be recalled and drawn.

But given an impression to copy (as artists used to do with human models for painting, for example), accurate rendering is possible, showing a brain’s ability to copy from a source sense and compare with that source’s target impression.

Humans are very good at copying, as we see in speaking with the sounds we hear in a language over time, in mimicking what we see in gymnastics, and in what we see and draw when learning how to write.

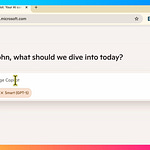

Linguistics indicates decomposition

My specialty since 2006 has been emulating the brain to use human languages on computers. In the same way that brain scans and brain damage reinforce the concept of sensory patterns being received in the cortex, then being refined again and again, and leading to multi-sensory wholes (e.g. comprehension in a region like Wernicke’s), we see linguistics build up from sounds to phonemes, to words, to phrases, to propositions.
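The build-up from smaller units to larger ones can be illustrated with a toy example. The sketch below is not the author's language software: the compose function, the phoneme labels, the phrase labels and the proposition notation are all hypothetical, and a greedy longest-match lookup is used only to show one level of patterns feeding the next.

```python
def compose(units, patterns):
    """Replace each known sequence of lower-level units with its higher-level pattern.

    Scans left to right and prefers the longest known sequence; units that
    match nothing are passed through unchanged.
    """
    longest = max((len(seq) for seq in patterns), default=1)
    out, i = [], 0
    while i < len(units):
        for size in range(min(longest, len(units) - i), 0, -1):
            seq = tuple(units[i:i + size])
            if seq in patterns:
                out.append(patterns[seq])
                i += size
                break
        else:
            out.append(units[i])   # no pattern starts here: keep the unit as-is
            i += 1
    return out


# Illustrative pattern tables, one per level of the hierarchy.
PHONEMES_TO_WORDS = {
    ("dh", "ax"): "the",
    ("k", "ae", "t"): "cat",
    ("s", "ae", "t"): "sat",
}
WORDS_TO_PHRASES = {("the", "cat"): "NP[the cat]", ("sat",): "VP[sat]"}
PHRASES_TO_PROPOSITIONS = {("NP[the cat]", "VP[sat]"): "sit(cat)"}

phonemes = ["dh", "ax", "k", "ae", "t", "s", "ae", "t"]
words = compose(phonemes, PHONEMES_TO_WORDS)
phrases = compose(words, WORDS_TO_PHRASES)
proposition = compose(phrases, PHRASES_TO_PROPOSITIONS)

print(words)         # ['the', 'cat', 'sat']
print(phrases)       # ['NP[the cat]', 'VP[sat]']
print(proposition)   # ['sit(cat)']
```

Each level only ever sees the output of the level below it, so the same word pattern can be re-used by any number of phrases.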

The strength of Patom theory when used in linguistics is fascinating, since it provides concrete examples of symbols being used in a hierarchy to rapidly communicate complex concepts with accuracy.

Are Pattern Atoms Symbols?

One of the takeaways today is that Patom theory models a brain with hierarchical patterns that connect patterns within senses first, then together later, and then combinations of those after that.

The model means that there are no symbolic snapshots, like .jpgs, stored in a brain. Instead there are a minimal number of patterns that, in combination, represent a range of connected representations of an object.

Note that for a complex object in the world, no single view is adequate to represent it, and for that reason a collection of sensory patterns can be connected at a higher level to resolve it.

Conclusion

AI is being held back by the ‘information processing model.’ When humans are inside a paradigm, it is difficult to break free from it. This delay reflects the reality that once we learn something, we build on top of it, making it difficult to “change our mind” about it. As the famous scientist, Max Planck, is quoted as saying, “Science progresses one funeral at a time.”

The model presented today is initiated by a psychologist applying his trade and then reinforced with theoretical neuroscience to explain brain function.

The change in approach, in combination with a range of existing engineering solutions in language, supports the model of the non-IP brain—of Patom brain theory.

Next time we will look at the work of the famous author, Steven Pinker, whose linguistic information processing / “IP-based” models can be decomposed into non-IP models to help in answering the question of how linguistics works.

Do you want to get more involved?

If you want to get involved with our upcoming project to enable a gamified language-learning system, the site to track progress is here. You can keep informed by adding your email to the contact list, or add a small deposit to become a VIP.

Do you want to read more?

If you want to read about the application of brain science to the problems of AI, you can read my latest book, “How to Solve AI with Our Brain: The Final Frontier in Science,” which explains the facets of brain science we can apply and why the best analogy today is the brain as a pattern-matcher. The book link is here on Amazon in the US (and elsewhere).

In the cover design below, you can see the human brain incorporating its senses, such as the eyes. The brain’s use is being applied to a human-like robot who is being improved with brain science towards full human emulation in looks and capability.